TL;DR: Most Web3 projects do not fail because of a hack. They fail because of the humans behind them. A Crypto Social Audit is the missing layer of security that evaluates off-code risks and signs of on-chain manipulation. While a security auditor checks the smart contract, we assess product-market fit, development progress, predatory VC vesting terms, Sybil/bot wallets, and inorganic followers or activity. This guide explains how we identify these risks and summarize them into simple metrics and a proprietary OG Score (Trust Score) to help you avoid scams and market manipulation.

Let’s be honest for a second. You and I have seen this happen more times than we can count: a project gets a 'gold standard' audit, lists on Tier 1 exchanges, and boasts flawless smart contracts. On paper, it looks perfect. Then, overnight, the token price drops 90% or goes to zero. This proves a hard truth in Web3: technical security is not the same as financial safety. We need to stop looking only at the code and start looking at the people, the intent signals, and the math behind the curtain.

So, who is responsible? Is it the influencers, predatory tokenomics, market makers, or an unmarketable idea? This is exactly where technical reviews fail. Our Crypto Social Audit approach fills this gap by evaluating off-chain risks, examining team transparency, product-market fit, competitive context, and community signals to identify the red flags that code audits simply cannot see!

The $5.5 Billion Wipeout Overnight

For those who do not remember the case, it is worth revisiting the timeline. Mantra is a Layer 1 RWA Blockchain network that launched in 2020 during the DeFi narrative.

On 13 April 2025, $OM collapsed by roughly 92% in a short window, falling from around $6.37 to below $0.40. The move erased an estimated $5.5 billion in market value and pushed the project’s market capitalization from more than $6 billion to below $600 million.

The team publicly attributed the event to centralized exchange dynamics and forced liquidations, while stating there were no team sales during the period. Regardless of intent, the case shows why “audited code” does not protect users from market structure risk. If OGAudit had launched earlier, crypto OG onboarding had already started, and a social audit had been conducted in late 2024, that audit would have highlighted valuation concerns and tested whether the L1 or RWA narrative was supported by observable demand.

How could this happen so easily?

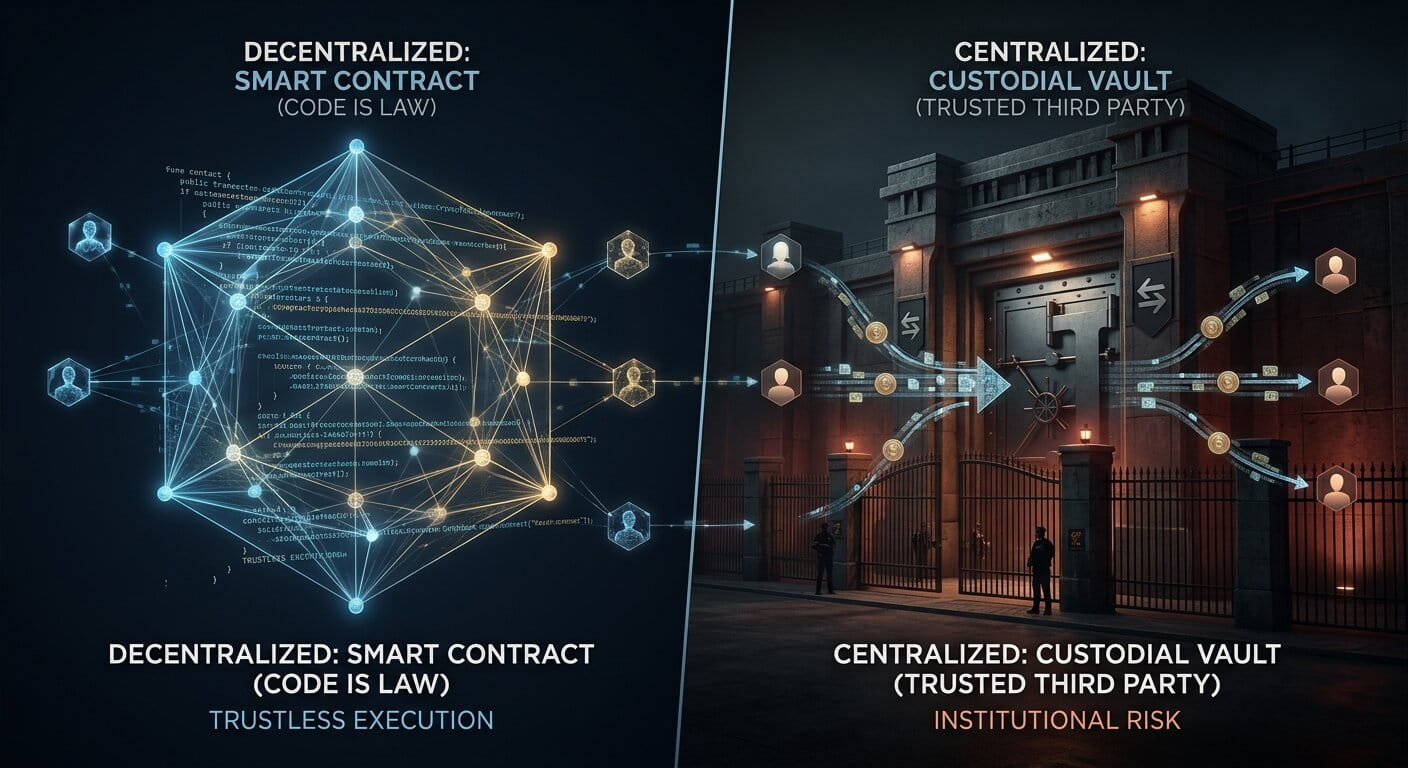

It happens because code audits check the machine, but they do not check the driver. A KYC review may confirm that a claimed identity exists, but it does not guarantee ethical behavior, financial integrity, or fair market conduct. Many Web3 corporations and foundations are not assessed beyond the code they deploy, leaving major gaps around governance, incentives, and execution.

Even when teams, exchanges, and market makers operate from regulated countries, they may still fall outside full, consistent oversight. In practice, decentralized and permissionless access are sometimes treated as a shield for avoiding accountability. Limited legal clarity, unclear jurisdiction, and weak deterrence for market manipulation can enable repeated abuse. As a result, retail participants often have little protection against market maker manipulations, poorly designed tokenomics, or DAO structures that appear to offer shareholder-like rights while keeping real decision-making power concentrated.

While these risks can exist even in legally structured projects that ship real products or services, they are significantly higher for malicious or anonymous projects designed as cover schemes from the outset. Such teams can halt services overnight, shut down communication channels, remove the trading liquidity, and walk away without consequences.

Let’s clarify how the OGAudit community addresses these structural issues and conducts a Crypto Social Audit, ensuring everything is aligned and key risks are identified.

The 3 Pillars of a Social Audit

In this article, we focus on three high-signal pillars. When I evaluate a crypto project, I do not focus on vanity metrics such as social media follower counts, visual charts, or heavily promoted marketing campaigns. Based on lessons learned over six years of experience in the crypto space, I apply a methodology that is effective across most crypto categories. Below is how I assess three core signals of legitimacy and healthy growth as a crypto social auditor.

1. Team Identity & Track Record

Are the founders anonymous? In 2026, anon teams are a major risk signal unless they have a strong, verifiable track record. If an anonymous team fails or acts in bad faith, they can disappear and reappear under a new name with little consequence. That is why OGAudit highlights founder identity when it is available. Before moving to the next pillars, here is what we check first.

The Check:

- Identity: I analyze the team members’ profiles and history on LinkedIn and X (Twitter). This process is often time-consuming, especially when this information is not disclosed on the project’s website. The objective is to determine whether an account represents a real individual with a consistent professional background or a fabricated profile.

- Reputation Search: I check the tone of their affiliations and run a quick search on Reddit and X for their names alongside keywords such as “scam,” “rug pull,” or “failed” to see whether negative community feedback exists and to validate my findings. I also look at who is supporting them: are they backed by reputable figures or by known shillers (influencers who promote anything for a few hundred bucks)? I look for genuine endorsements and recommendations from other industry professionals, not paid influencers. In addition, I check real expert reviews on OGAudit to gain a clearer understanding of actual community sentiment.

- Digital Footprint: I check when the website domain was registered. A project claiming "years of experience" with a domain registered last month is almost certainly misrepresenting its history, because registering the domain is usually one of the first steps after having the idea.

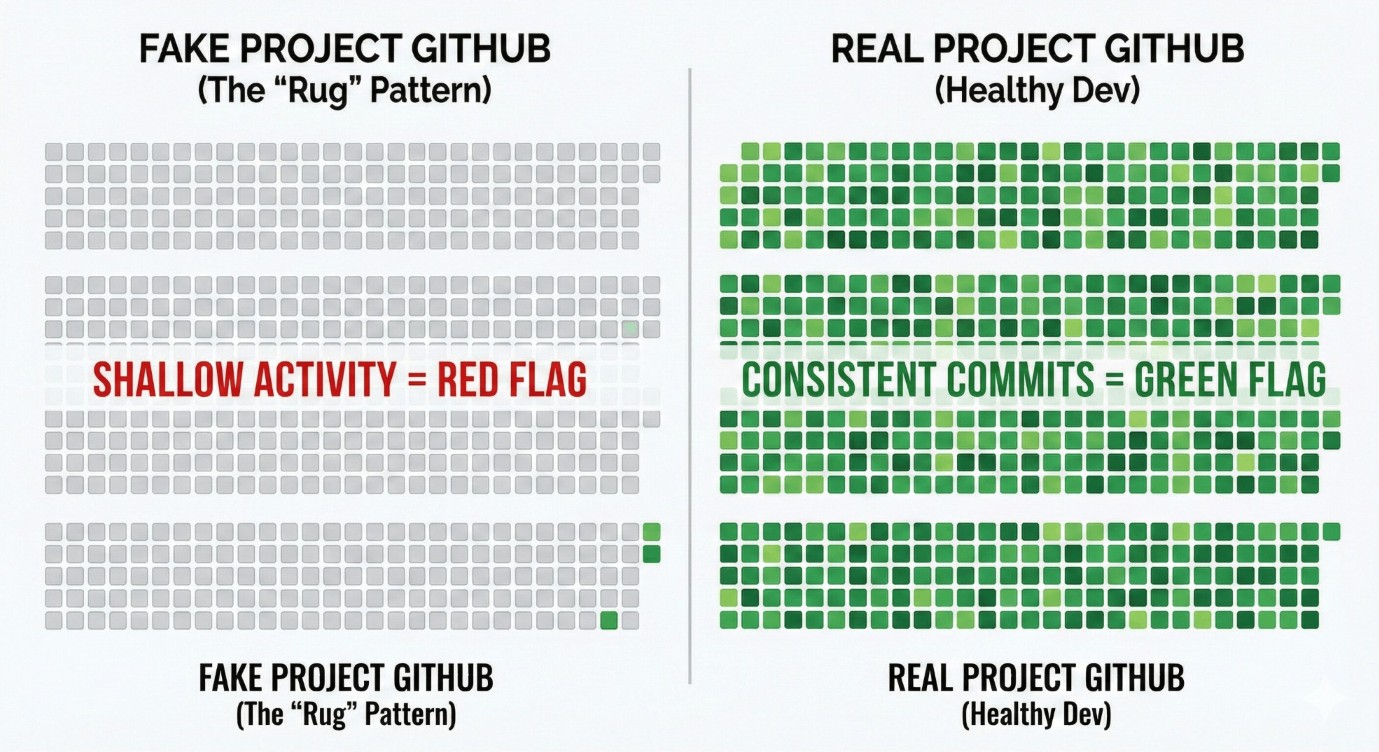

- Work History (GitHub): I look at the work that has actually been done. I check the GitHub repository and, more importantly, the commit history. Have they been coding consistently for a long time, or did the repository just pop up a few days ago with copied code?
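
The commit-history check in the list above can be approximated with a few summary numbers. The following is a minimal sketch, not the tooling OGAudit uses: it assumes the commit dates have already been collected by hand or exported from the repository, and the function name `commit_activity_summary` is hypothetical.

```python
from datetime import date


def commit_activity_summary(commit_dates: list[date]) -> dict:
    """Summarize how long and how steadily a repository has been worked on."""
    if not commit_dates:
        return {"commits": 0, "span_days": 0, "active_weeks": 0, "longest_gap_days": 0}

    ordered = sorted(commit_dates)
    # Days between the first and the last commit.
    span_days = (ordered[-1] - ordered[0]).days
    # Number of distinct ISO (year, week) pairs that contain at least one commit.
    active_weeks = len({tuple(d.isocalendar())[:2] for d in ordered})
    # Longest silence between two consecutive commits.
    gaps = [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]

    return {
        "commits": len(ordered),
        "span_days": span_days,
        "active_weeks": active_weeks,
        "longest_gap_days": max(gaps, default=0),
    }


# Hypothetical input: 40 commits that all landed on the same day.
dump = commit_activity_summary([date(2025, 3, 1)] * 40)
```

A repository with many commits but a span of zero days and a single active week looks like a one-off code dump, while a long span with many active weeks suggests sustained work. These numbers only prompt further questions; they do not show whether the code is original.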

Red Flags: A domain registered only a couple of months ago, combined with a shallow GitHub (limited or no code updates) and a shiny website that offers vague promises but no clear, working mechanics. Sparse commit history does not always indicate fake development, but it can suggest an uncoordinated effort, especially if the project is not run by a single developer.
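
The domain-age red flag reduces to simple date arithmetic. The sketch below assumes the registration date has already been looked up (for example through a public WHOIS or RDAP service) and typed in by hand. The function names are hypothetical, and the 365-day year is a deliberate simplification.

```python
from datetime import date


def domain_age_days(registered_on: date, today: date) -> int:
    """Number of days the domain has existed as of `today`."""
    return (today - registered_on).days


def experience_claim_mismatch(registered_on: date, today: date, claimed_years: float) -> bool:
    """True when the claimed history is longer than the domain has existed."""
    return domain_age_days(registered_on, today) < claimed_years * 365


# Hypothetical project: domain registered one month ago, claiming three years of history.
suspicious = experience_claim_mismatch(date(2025, 1, 1), date(2025, 2, 1), claimed_years=3)
```

A mismatch is a reason to ask questions rather than proof of bad faith, since teams sometimes rebrand or move to a new domain.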

However, team identity legitimacy alone does not solve the problem. Fake profiles still exist, and even real individuals can act in bad faith. In other cases, teams may be genuine with good intent but lack the experience, understanding, or practical awareness required to build and scale a company, remaining disconnected from market realities. We have also seen 'doxxed' founders walk away just as easily when there is no real legal or financial accountability.

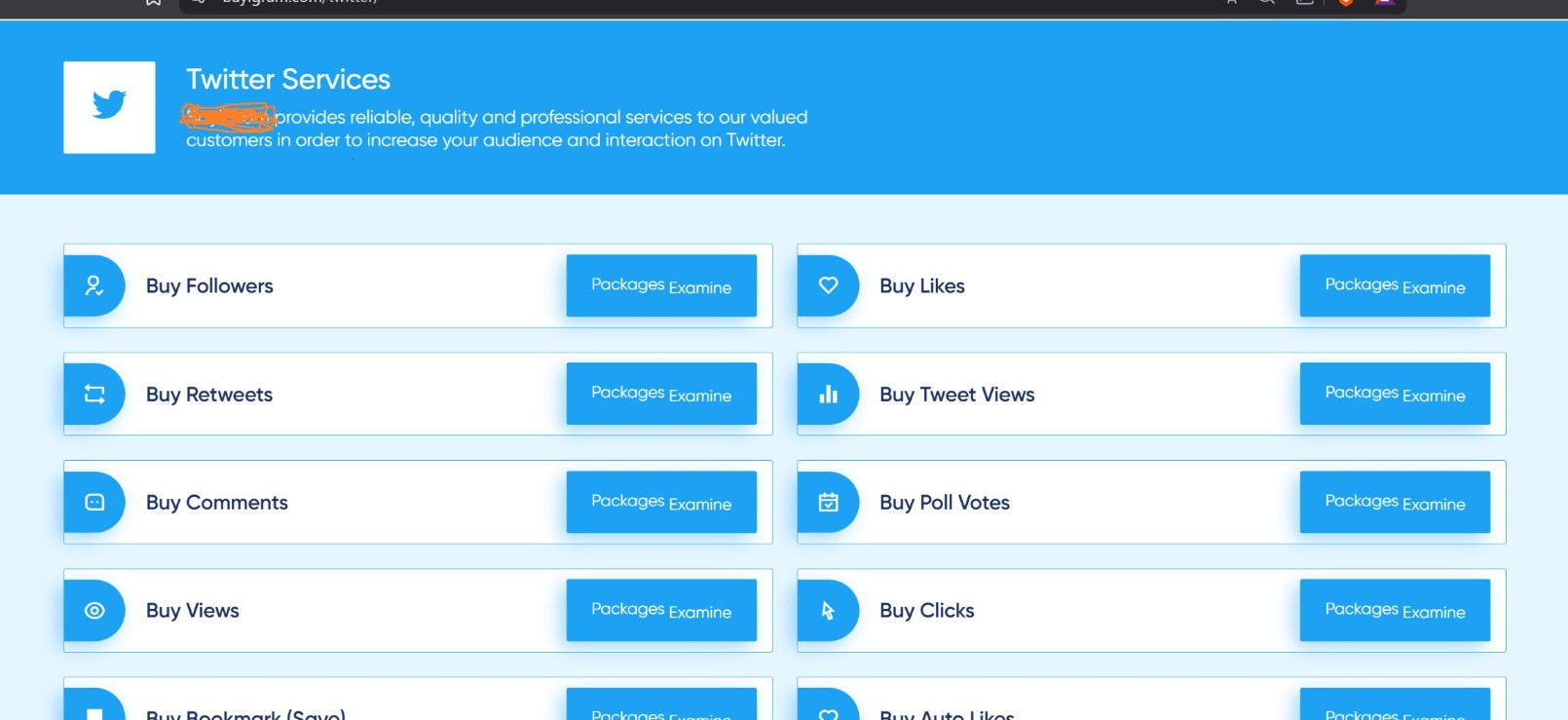

2. Community Health (Organic vs. Bots)

You can buy ~100,000 Telegram members or X (Twitter) followers instantly for just $1,000, but you cannot buy genuine conversation and real users. Today, teams do not just buy followers; they pay "engagement farms" to artificially boost likes and replies and trick the algorithms. Even worse, some projects inflate their active user and token holder counts by manipulating on-chain data, creating thousands of bot wallets (Sybil wallets) to fake adoption. This "wallet farming" makes it look like there are thousands of users, but in reality, it is just one developer moving tokens in a circle to trick people like you and me.

The Check:

- The Chat Logs: I scroll through the Discord and Telegram history. Are real people asking specific questions about the product roadmap?

- Support & Criticism: I assess how the team responds to pressure and negative feedback. Do they address difficult questions with honesty, or do they ban users and remove critical comments? On X, a post's author can hide replies but cannot delete other users' replies, which makes this behavior easier to observe. Reviewing recent posts and checking hidden replies often reveals whether the team is ignoring legitimate grievances while responding only to hype-driven comments.

- The "Millions of Users" Lie: I verify their claims with hard data. If a Web3 app claims "millions of active users," I check their website traffic using tools like Semrush and Ahrefs. If they claim high transaction volume or liquidity, I check the Blockchain Explorer for that specific chain. Do the numbers match the advertisement? I also use DeFi analytics platforms such as DefiLlama, along with more advanced on-chain tools like Arkham and Bubblemaps, to analyze token distribution and actual network activity, and to estimate the proportion of developer controlled or insider wallets.
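
The token distribution step in the list above comes down to two ratios: how much of the supply the largest wallets hold, and how much sits in wallets attributed to insiders. The sketch below assumes a holder list has been exported by hand from an explorer or analytics tool. The addresses, the labels, and both function names are hypothetical.

```python
def top_holder_share(balances: dict[str, float], top_n: int = 10) -> float:
    """Share of total supply held by the `top_n` largest wallets (0.0 to 1.0)."""
    total = sum(balances.values())
    if total <= 0:
        return 0.0
    largest = sorted(balances.values(), reverse=True)[:top_n]
    return sum(largest) / total


def labeled_share(balances: dict[str, float], labeled: set[str]) -> float:
    """Share of total supply held by wallets the auditor has labeled (e.g. team, insiders)."""
    total = sum(balances.values())
    if total <= 0:
        return 0.0
    return sum(amount for address, amount in balances.items() if address in labeled) / total


# Hypothetical holder list and insider labels.
holders = {"0xaaa": 50, "0xbbb": 30, "0xccc": 15, "0xddd": 5}
top2 = top_holder_share(holders, top_n=2)
insiders = labeled_share(holders, {"0xaaa", "0xddd"})
```

Exchange and contract wallets often appear among the largest holders, so they should be identified and excluded before drawing conclusions about concentration.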

Red Flags: A fixed and repetitive engagement pattern, such as every post receiving the same number of likes and retweets, for example 300 likes and 200 retweets accompanied by bot generated comments. A Discord server or Telegram group with 50,000 members but only a handful of users online, or chats dominated by templated messages such as “Great project sir” or “When moon?” spam. Claims of large scale adoption paired with fewer than 1,000 monthly website visitors are another clear warning sign. If on-chain data shows thousands of transactions that appear to be wash trading or automated activity designed solely to inflate volume, this should be treated as an immediate failure signal.
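
Several of these red flags can be turned into rough numbers. The following sketch assumes like counts, member counts, and chat messages have been collected by hand; the function names and the template list are hypothetical, and no threshold here is an official OGAudit value.

```python
from statistics import mean, pstdev


def engagement_variation(likes_per_post: list[int]) -> float:
    """Coefficient of variation of likes; values near 0 mean nearly identical counts."""
    if len(likes_per_post) < 2:
        raise ValueError("need at least two posts to compare")
    average = mean(likes_per_post)
    return pstdev(likes_per_post) / average if average else 0.0


def online_ratio(online_now: int, total_members: int) -> float:
    """Fraction of group members who are currently online."""
    return online_now / total_members if total_members else 0.0


def templated_share(messages: list[str], templates: list[str]) -> float:
    """Fraction of messages that match a known template after light normalization."""
    if not messages:
        return 0.0

    def normalize(text: str) -> str:
        return text.strip().lower().rstrip("?!.")

    known = {normalize(t) for t in templates}
    return sum(1 for m in messages if normalize(m) in known) / len(messages)


# Hypothetical samples.
uniform = engagement_variation([300, 300, 300, 300])
organic = engagement_variation([120, 45, 980, 15])
spam = templated_share(
    ["Great project sir", "when moon?", "How does staking slashing work?"],
    ["Great project sir", "When moon?"],
)
```

Organic accounts tend to show uneven engagement from post to post, so a variation close to zero across many posts deserves a closer look. Exact-match template detection is crude and will miss paraphrased bot messages.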

3. Promise vs. Reality (Product & Market Fit)

This is where most teams fail, whether through poor execution or because the project was designed as a scam from the start. Bad actors often produce AI-generated whitepapers filled with confusing jargon and excessive technical language to distract reviewers from a basic reality: there is no clear value proposition and no marketable product. In other cases, well-intentioned founders operate under the assumption that “if we build it, users will come,” while overlooking market demand and the difficulty of becoming a revenue-generating project. Many founders ignore the fact that success requires skills, resources, and execution, not just an appealing idea. To me, a healthy Web3 project must be marketable, sustainable, and solve a real problem. Once I determine that a team is addressing a legitimate issue, I focus on verifying whether their claims align with reality. Here is how I assess that.

The Check:

- The Whitepaper Stress Test: I start with the whitepaper (or the litepaper). Does it clearly explain the problem and the solution, or is it a word salad of "revolutionary" buzzwords generated by ChatGPT or Gemini? I look for a clear path to revenue and long-term sustainability, not just a mechanism to sell tokens.

- Market Valuation & Competitors: I estimate the project's true market value by comparing it to established competitors. Sometimes the team markets the same open source project with shiny UI/UX and pushes you to think it has a unique value proposition. While these can be difficult to look into for casual investors, my experience allows me to spot valuation gaps in seconds. If a new project with no users is valued higher than a working industry leader, something is definitely wrong.

- Metric Verification: I compare the promises made in the whitepaper against actual performance metrics. Do the website visitor stats, organic social engagement, and on-chain activity align with their claims of "massive adoption"?

- Narrative Hopping: I watch out for teams that pivot to match the current dominant narrative. When "AI" was the trend, countless projects added "AI" to their names despite having no related technology. A project should have a core identity, not just a trending hashtag.

- Financial Health & Tokenomics: I question how the project is funded. Can they sustain development without dumping tokens on the market? I check if backers and the team have long-term vesting schedules to prevent early sell-offs. Crucially, I analyze the token utility: does the token actually have a use case within the ecosystem, or does it exist solely to raise funds? Poorly designed tokenomics is a common driver of market cap collapse. In addition, I check whether DEX trading pairs have sufficient and locked liquidity, as inadequate or unlocked liquidity can make entering or exiting a position extremely difficult due to high slippage, especially after exchange delistings.

- The VC Exit Trap: I check who the backers are. If a project has "Tier 1 VCs" who got their tokens at a 90%+ discount, they are often looking for retail investors to be their "exit liquidity." I look for fair launch dynamics or long vesting cliffs.

- Roadmap Integrity: I check if the team is actually delivering on its promises. If the roadmap says "Launching a DEX in Q1," but the GitHub repository shows no meaningful developer commits in months, the roadmap is probably not being followed.

- Resource Allocation: I assess the team size relative to the project’s stated goals. If a project promises a large ecosystem that would typically require ten or more engineers but operates with only two team members while allocating substantial resources to influencers and marketing, this raises serious concerns about priorities and delivery capacity. It is also important to note that engineers only cover the software development aspect. Leadership, marketing and communications, community management, business development, and other operational functions are equally critical.
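
The vesting and VC discount checks in the list above involve simple arithmetic that is worth making explicit. This is a sketch under stated assumptions: nothing unlocks before the cliff, tokens then unlock linearly month by month, and the discount is measured against the public price. Real vesting contracts vary, and both function names are hypothetical.

```python
def unlocked_fraction(months_since_launch: float, cliff_months: float, vesting_months: float) -> float:
    """Fraction of an allocation unlocked under a cliff followed by linear vesting."""
    if months_since_launch < cliff_months:
        return 0.0
    if vesting_months <= 0:
        return 1.0  # Everything unlocks at the cliff.
    return min(1.0, (months_since_launch - cliff_months) / vesting_months)


def multiple_at_public_price(discount: float) -> float:
    """Paper return multiple at the public price for a buyer who paid (1 - discount) of it."""
    if not 0 <= discount < 1:
        raise ValueError("discount must be in [0, 1)")
    return 1 / (1 - discount)


# Hypothetical terms: 12-month cliff, 24-month linear vesting, 90% discount.
halfway = unlocked_fraction(24, cliff_months=12, vesting_months=24)
vc_multiple = multiple_at_public_price(0.90)
```

A backer who bought at a 90% discount sits at roughly 10x at the public price, and is still at about 2x after the public price has fallen 80%. That asymmetry is why short cliffs combined with deep discounts create selling pressure on retail buyers.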

Red Flags: A whitepaper filled with AI generated technical jargon but no clear business model. A roadmap that is repeatedly delayed while GitHub remains inactive. Adding trendy keywords such as AI, Metaverse, or RWA to the project name without any real technological backing. A token with no clear utility beyond “governance” for a product that does not yet exist. A very small team making outsized promises while allocating most of the budget to Key Opinion Leaders and media exposure instead of development. Claims of onboarding millions of users while blockchain explorers show only around 100 daily active users. Extraordinary promises paired with a shrinking team of only two members, no active hiring, or fake job listings that remain open for more than six months without progress are also red flags.
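
The liquidity check in the list above can be made concrete with a slippage estimate. The sketch below assumes a constant-product pool (reserves satisfying x * y = k, as in Uniswap v2 style pools) and ignores trading fees; pools with other designs behave differently. The reserve figures and the function name are hypothetical.

```python
def constant_product_sell(token_reserve: float, quote_reserve: float, tokens_in: float) -> tuple[float, float]:
    """Return (quote received, price impact) for selling tokens into an x*y=k pool, no fees."""
    if token_reserve <= 0 or quote_reserve <= 0 or tokens_in <= 0:
        raise ValueError("reserves and trade size must be positive")
    # Output that keeps the product of the reserves constant.
    quote_out = quote_reserve * tokens_in / (token_reserve + tokens_in)
    spot_price = quote_reserve / token_reserve
    execution_price = quote_out / tokens_in
    price_impact = 1 - execution_price / spot_price
    return quote_out, price_impact


# Hypothetical thin pool: 1,000,000 tokens against 100,000 units of a stablecoin.
small_sale = constant_product_sell(1_000_000, 100_000, 10_000)
large_sale = constant_product_sell(1_000_000, 100_000, 1_000_000)
```

In this model the price impact equals tokens_in / (token_reserve + tokens_in), so selling an amount equal to the pool's token reserve realizes only half of the quoted spot value. The thinner the pool, the smaller the position that can be exited without heavy losses.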

Understanding the limitations: We have to be fair here. A Social Audit is not a crystal ball, and it is not a verdict. It highlights behavioral and structural risk signals, but it can still miss critical details due to limited public information, sophisticated deception, or simple human error. It works best when combined with code audits, basic operational checks, and ongoing monitoring.

Not every failure is a sudden collapse. Some teams remain publicly active while gradually extracting value over time through steady selling. This creates a "slow bleed" rather than a single breaking point. This is why continuous monitoring of price, liquidity, and on-chain behavior matters. Experienced Crypto OG social auditors often connect these dots early and flag such rug-pull patterns in audit reviews.
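
A "slow bleed" can be approximated from periodic balance snapshots of a wallet attributed to the team. This is a rough sketch only: the snapshot series, both thresholds, and the function name are hypothetical, and wallet attribution itself is assumed to have been done separately.

```python
def slow_bleed_signal(
    balances: list[float],
    min_down_share: float = 0.75,
    min_total_drop: float = 0.30,
) -> bool:
    """True when the balance fell in most periods and the cumulative drop is large."""
    if len(balances) < 2 or balances[0] <= 0:
        return False
    changes = [later - earlier for earlier, later in zip(balances, balances[1:])]
    # Share of periods in which the balance decreased.
    down_share = sum(1 for change in changes if change < 0) / len(changes)
    # Cumulative decline from the first to the last snapshot.
    total_drop = 1 - balances[-1] / balances[0]
    return down_share >= min_down_share and total_drop >= min_total_drop


# Hypothetical weekly snapshots of a team-labeled wallet (in millions of tokens).
bleeding = slow_bleed_signal([100, 95, 90, 86, 80, 74, 69])
stable = slow_bleed_signal([100, 100, 100, 100])
```

The check does not distinguish selling from transfers to another team wallet or a staking contract, so each outflow still needs to be traced before it is described as a sale.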

Another challenge is detecting private arrangements. To reduce blind spots, we use community cross-checks and baseline contributor criteria, such as minimum wallet age and a burned registration fee. This keeps evaluations aligned with the goal of making crypto safer rather than chasing external incentives. At OGAudit, every independent audit also includes a visible price stamp and remains permanently published along with the review. Auditors build their reputation over time or damage it through poor work. As the Chief OG of OGAudit, I will ensure these guidelines are enforced and that every social auditor follows a professional, unbiased, and honest approach.

We strictly follow our Community Guidelines. We remind contributors that anyone can become a social auditor on OGAudit, but maintaining that status requires consistency and accountability. We will also publish quarterly transparency reports to show progress and provide clarity.

Closing Thoughts and What’s Next

The Crypto Social Audit is not just theory; it is a methodology built on my 15 years of experience in IT, marketing, and education, combined with 6 years deep in the crypto trenches. At OGAudit, we use this experience to provide the meaningful market comparisons that code audits alone cannot offer. We focus on human variables, the value proposition, and the team’s intent, factors that remain a critical, yet often missing, part of due diligence.

Our goal is to raise awareness about these risks and help participants understand the precautions they can take to navigate Web3 safely. Before you decide, check what Crypto OGs are saying about your next favorite project. DYOR carefully.

The OG Score (Trust Score): Turning Theory into Data

It does not end here. We have distilled our three pillars into six core metrics that form the foundation of the OG Score (Trust Score) for coins. We use these metrics to score projects during a social audit, explaining the outcome in a clear, simplified format.

In the next article, we explain the six core metrics in detail and show how independent, experienced crypto auditors in the OGAudit community use them to assess a project’s strengths and risks at a glance.